Tips for Redesigning Your Assessment

The following practical tips provide a starting point for educators looking to redesign their assessments in response to GenAI.

Start with the learning outcomes.

Clearly define what you are assessing — application of knowledge, critical thinking, problem solving, analytical or evaluative skills — and determine whether the use of GenAI would support or undermine those outcomes.

Be explicit about AI permissions.

Clearly communicate whether learners are permitted to use GenAI and, if so, to what extent and for which purposes. Where expectations cannot realistically be monitored or evidenced, consider redesigning the task so that learning is made visible rather than relying on unenforceable restrictions.

Balance higher-order thinking with foundational knowledge.

Design assessments that prioritise analysis, evaluation, and creation, while also ensuring that students demonstrate the essential disciplinary knowledge needed to underpin those higher-order skills — sometimes independently of AI support.

Use multi-faceted and individualised assessment formats.

Combine artefact-based tasks with lower-risk approaches such as oral presentations, peer evaluation, drafts or sketches, reflective journals, or short viva-style discussions to verify understanding and personalise learning.

Assess the learning process, not only the final product.

Build in touchpoints or staged components where each part of the assessment develops from the previous one. This might include proposals, annotated drafts, progress reflections, or interactive oral elements. These approaches make student thinking visible and strengthen assessment validity.

Incorporate structured collaboration.

Include opportunities for collaborative problem-solving, peer feedback, or group projects, with mechanisms to recognise individual contributions. Collaboration reflects authentic professional practice and makes learning less easily outsourced.

Engage students in dialogue about AI and assessment.

Facilitate open conversations about GenAI use, academic integrity, and responsible practice. Where appropriate, involve learners in aspects of assessment design — such as discussing criteria or co-creating elements of marking rubrics — in line with inclusive and UDL-informed approaches.

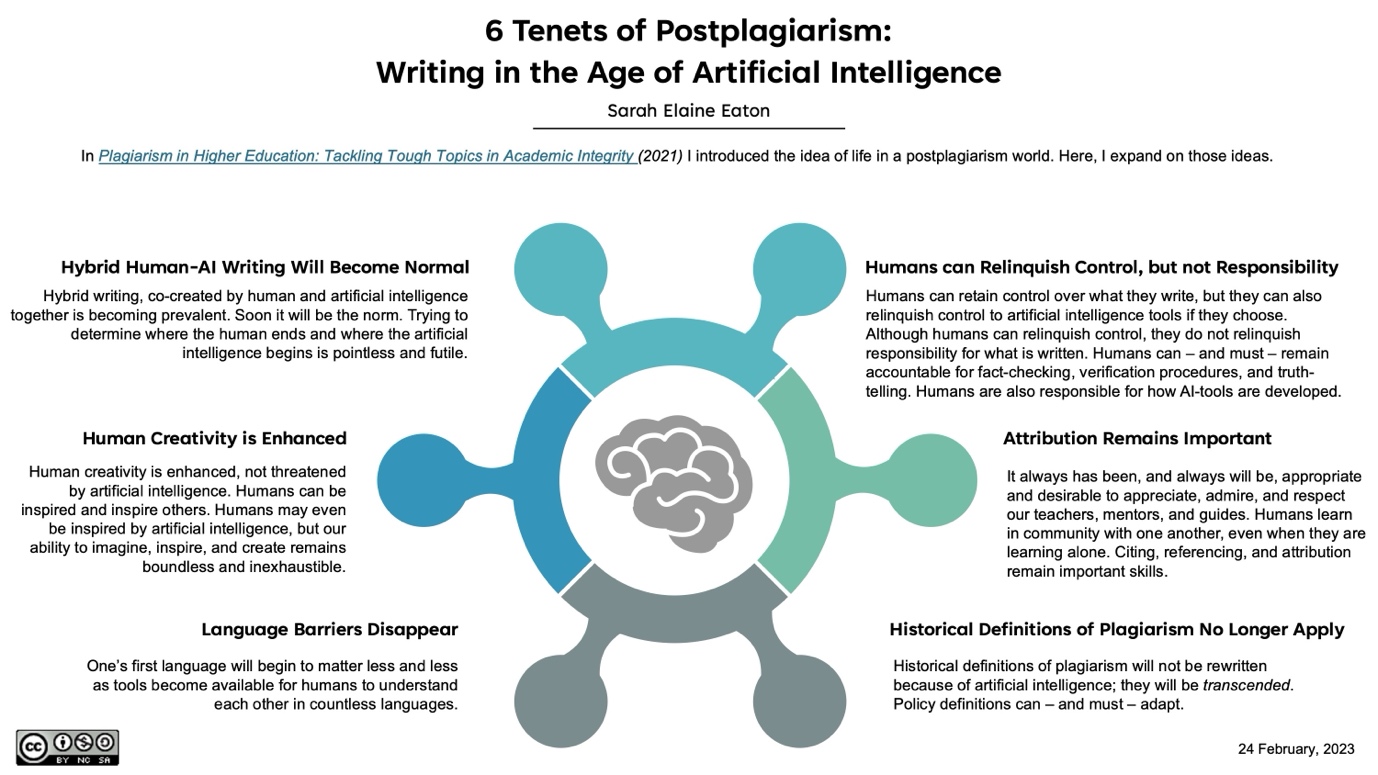

Postplagiarism

Historical definitions of plagiarism will not be rewritten because of artificial intelligence; they will be transcended

— Sarah Eaton

As AI technology continues to develop at a rapid pace, it is important to consider what the future may look like for educators. While plagiarism traditionally refers to copying or paraphrasing someone else's work without proper attribution, 'postplagiarism' in the context of GenAI is a term that encapsulates the new challenges to academic integrity in higher education.

Key Aspects of Postplagiarism

AI-Generated Content

Students and researchers might use AI to generate essays, reports, or other academic materials. This raises the question of whether such content should be considered original or whether it constitutes a form of plagiarism, especially if the use of AI is not disclosed.

Authorship and Ownership

Traditional academic work is credited to individuals based on their intellectual contribution. However, when AI plays a significant role in content creation, the lines of authorship and ownership become blurred.

Attribution

There is ongoing debate about how to attribute AI-generated content. Should students cite the AI as a source, similar to a book or article? Or is the use of AI tools similar to using a calculator or spellchecker?

Academic Integrity Policies

Updating these policies has become necessary to reflect the challenges presented by AI and to balance encouraging the responsible use of technology with maintaining the integrity of academic work.

Ethical Considerations

The ease of generating content with AI may tempt students and researchers to submit work they have not meaningfully engaged with or understood.

While there are significant challenges, there are also opportunities to develop innovative approaches and embrace new mindsets.

Conclusion

GenAI does not diminish the value of assessment; it sharpens our understanding of why assessment matters. In an AI-enhanced world, assessment is no longer simply a mechanism for measuring knowledge, but a means of making learning visible and revealing how students think, apply, question, create, and grow.

Redesigning assessment in response to GenAI requires informed, reflective, and collaborative engagement from educators. It also requires institutional support, space for professional development, and recognition of the emotional and cognitive load that rapid technological change can bring. Equity, access, and ethical considerations must remain central.

This framework affirms that sustainable academic integrity is achieved not through surveillance or detection alone, but through thoughtful, structurally sound assessment design, the development of AI literacy, and a culture that values learning processes as much as outcomes.

Initially developed as part of the N-TUTORR national project GenAI:N3, this framework is a living resource that continues to evolve. The approaches outlined here can be adapted, combined, and expanded in response to disciplinary needs, emerging research, and evolving technologies.

Many thanks to reviewers Dr Jim O'Mahony, MTU and Damien Raftery, SETU.

Reading List

- Artificial Intelligence Act, “EU Artificial Intelligence Act | Up-to-date developments and analyses of the EU AI Act”, https://artificialintelligenceact.eu/

- Bassett, M. A., Bradshaw, W., Bornsztejn, H., Hogg, A., Murdoch, K., Pearce, B., & Webber, C. (2026). Heads we win, tails you lose: AI detectors in education. Journal of Higher Education Policy and Management, 1–16. doi:10.1080/1360080X.2026.2622146

- Chan, C.K. and Colloton, T. (2024) 'Redesigning assessment in the AI era', Generative AI in Higher Education, pp. 87–126. doi:10.4324/9781003459026-4.

- Corbin, T., Dawson, P., & Liu, D. (2025). Talk is cheap: why structural assessment changes are needed for a time of GenAI. Assessment & Evaluation in Higher Education, 50(7), 1087–1097. doi:10.1080/02602938.2025.2503964.

- Corbin, T., Bearman, M., Boud, D., & Dawson, P. (2025). The wicked problem of AI and assessment. Assessment & Evaluation in Higher Education, 1–17. doi:10.1080/02602938.2025.2553340.

- Curtis, G. J. (2025). The two-lane road to hell is paved with good intentions: why an all-or-none approach to generative AI, integrity, and assessment is insupportable. Higher Education Research & Development, 44(8), 2151–2158.

- Dawson, P. (August 2024) 'How to make a 100% accurate AI detector: or why we need specificity in discussions of AI detector accuracy'.

- Dawson, P., Bearman, M., Dollinger, M., & Boud, D. (2024). Validity matters more than cheating. Assessment & Evaluation in Higher Education, 1–12. doi:10.1080/02602938.2024.2386662.

- Deep, P. D. (2025). Evaluating the effectiveness and ethical implications of AI detection tools in higher education. Information, 16(10), 905. doi:10.3390/info16100905.

- Dietis, N. (2025, March 25). AI in Higher Education: Mapping Key Guidelines and Recommendations. European Commission Futurium.

- Farrell, H. & McCarthy, K. (2025). Manifesto for Generative AI in Higher Education: A living reflection on teaching, learning, and technology in an age of abundance. GenAI:N3, South East Technological University. https://manifesto.genain3.ie/

- Furze, L. (2024) Practical AI strategies: Engaging with Generative AI in Education. Melbourne, Australia: Amba Press.

- Hartley, P. et al. (2024) 'Using generative AI effectively in Higher Education'. doi:10.4324/9781003482918-1.

- International College of Management Sydney (2024): Artificial Intelligence in Education (AIED) Framework.

- Kim, H.N. (2025). Detecting the Undetectable: The Need for a New Paradigm for Academic Writing Evaluation in the AI Era. doi:10.1007/978-3-031-94153-1.

- Liu, D. and Bridgeman, A. (June 2023) 'ChatGPT is old news: How do we assess in the age of AI writing co-pilots?'

- Liu, D. and Bridgeman, A. (Dec. 2023) 'Embracing the Future of Assessment at the University of Sydney'.

- Liu, D. and Bridgeman, A. (July 2024) 'Frequently asked questions about the two-lane approach to assessment in the age of AI'.

- Lodge, J. M., Howard, S., Bearman, M., Dawson, P., & Associates (2023) 'Assessment reform for the age of Artificial Intelligence'. TEQSA.

- NAIN AI guidelines (2023): NAIN Generative AI Guidelines for Educators.

- Newton, P. M., & Draper, M. J. (2025). Widespread use of summative online unsupervised remote (SOUR) examinations in UK higher education. Quality in Higher Education, 31(1), 127–141. doi:10.1080/13538322.2025.2521174.

- O'Sullivan, James, Colin Lowry, Ross Woods & Tim Conlon. AI in Higher Education Teaching & Learning: Policy Framework. Higher Education Authority, 2025. doi:10.82110/073e-hg66.

- Perkins, M., Roe, J., Furze, L. (2025) 'Reimagining the Artificial Intelligence Assessment Scale'. Journal of University Teaching and Learning Practice, 22(7). doi:10.53761/rrm4y757.

- Suhail, A.H., Guangul, F.M. and Nazeer, A. (2024) 'Evolution of assessment and feedback methods in Higher Education'. doi:10.4018/979-8-3693-2145-4.ch003.

- TEQSA guidelines (Nov. 2023): Assessment reform for the age of artificial intelligence.

- UNESCO. 2021. Recommendation on the Ethics of Artificial Intelligence. Paris: United Nations Educational, Scientific and Cultural Organization. https://unesdoc.unesco.org/ark:/48223/pf0000381137.

- Upsher, R. et al. (2024) 'Embracing generative AI in authentic assessment'. doi:10.4324/9781003482918-16.

- Various articles — 'AI and Assessment in Higher Education', Times Higher Education Spotlight.

- Virmani, S. and Lau, A. (June 2024) 'Balancing impact and time: A strategy for adapting assessments in the age of GenAI', The SEDA Blog.