Integration Factors

The incorporation of GenAI into the assessment process can enhance the learning experience and contribute towards establishing more engaging and authentic practices. However, it is also important to determine whether the integration of GenAI into your assessment is appropriate.

The Artificial Intelligence in Education (AIED) Framework, developed by the International College of Management in Sydney, recommends consideration of the following factors:

Educational Reasoning

If students are asked to demonstrate their understanding, critical thinking skills, or ability to apply knowledge independently, relying heavily on AI could undermine the intended learning outcomes.

The Nature of the Task

If the task aims to assess a student's writing proficiency, using GenAI to produce the written content would make this impossible. In contrast, if the task is focused on exploring AI capabilities or understanding its applications, the use of GenAI may be appropriate.

The Function of the Task

If a student's mastery of specific concepts or their ability to solve complex problems is being assessed, relying on AI could hinder the accurate evaluation of their skills and knowledge.

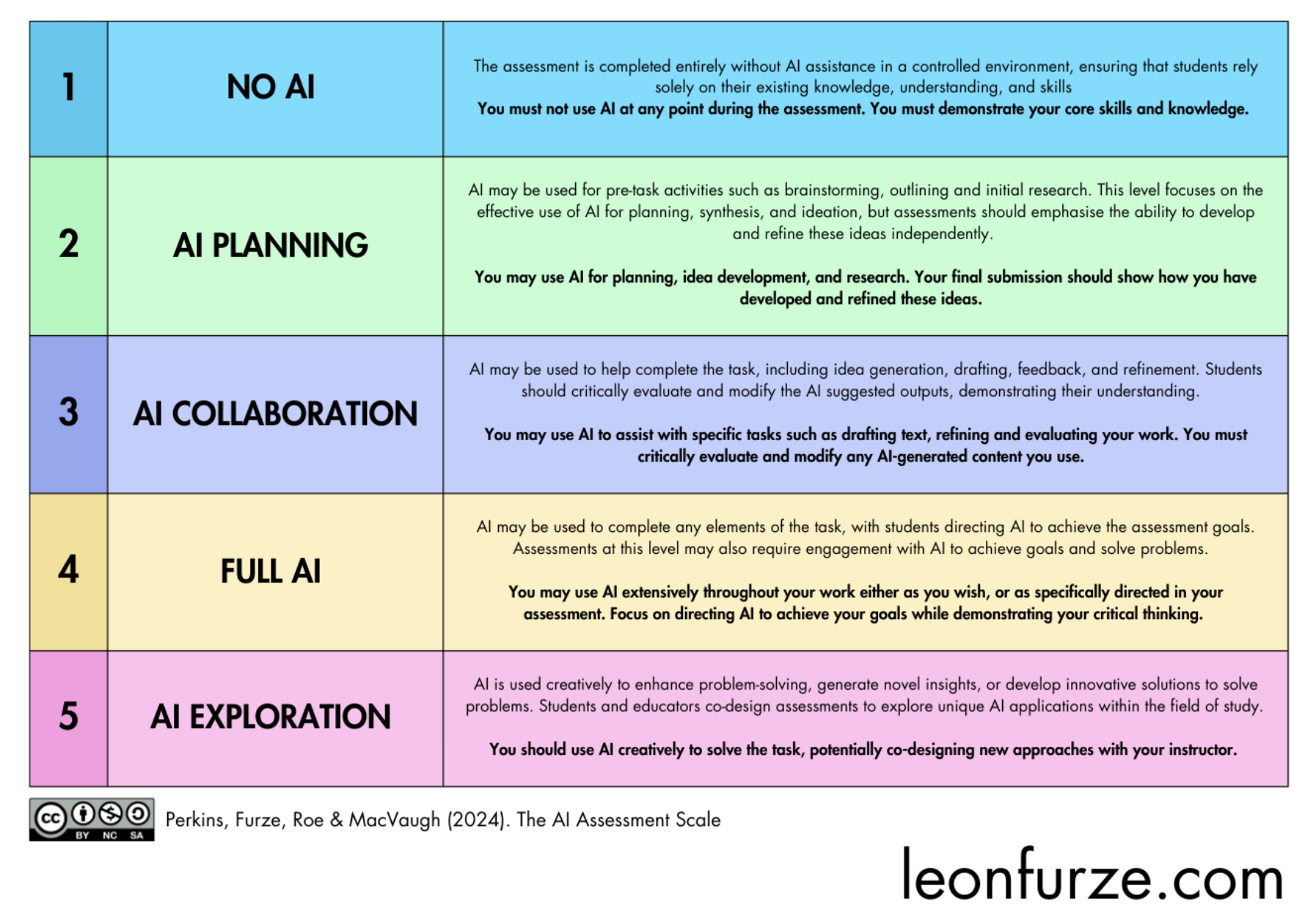

The AI Assessment Scale

The following scale developed by Leon Furze et al. outlines the varying levels of GenAI integration possible in the assessment process. The different levels may be adopted depending on a range of factors including: the discipline; the nature of the assessment; the purpose of the assessment; and the intended learning outcomes.

Note: The Leon Furze AI Assessment Scale is a visual reference tool. Please refer to Furze, L. (2024) 'Practical AI strategies: Engaging with Generative AI in Education' for the full framework.

The Two-Track Approach

Liu and Bridgeman (2023) at the University of Sydney simplify this further by identifying two clear assessment tracks:

Track 1 — Secured

AI-Restricted: AI use is typically not permitted unless the ethical use of an AI tool is purposefully being assessed. The focus is on 'assessment of learning'. These assessments are supervised, and unauthorised use of AI is considered a breach of academic integrity.

Track 2 — Open

AI-Integrated: Responsible use of AI is encouraged. These assessments are less supervised, promoting engagement with AI and preparing students for an AI-integrated society. Acceptable AI usage is clearly detailed in the Assessment Briefs, and any use of AI outside of this is considered a breach of academic integrity.

The incorporation of both tracks is viewed as a positive step in creating a balanced assessment environment where foundational knowledge and critical thinking skills remain relevant, while also focusing on authentic assessments. It is suggested that most assessments should fall into Track 2 as we prepare our learners for future careers in an increasingly AI-enhanced landscape.

Structural Redesign vs Discursive Control

More recently, discussions about the enforceability of such models have drawn a distinction between discursive control (rules and warnings) and structural redesign (changing the mechanics of assessment). Structural redesign provides more sustainable integrity solutions (Corbin, Dawson, Liu, 2025).

| Approach | Focus | Limitation / Opportunity |
|---|---|---|
| Discursive control | Rules, warnings, AI bans | Relies on student compliance |
| Structural redesign | Changing the mechanics of assessment | Builds validity into the task itself |

Focusing on the Process

Process-based assessments emphasise how learners arrive at conclusions, not just final products. This type of assessment is particularly valuable in preparing students for complex, real-world challenges where the journey is just as important as the destination.

Requiring evidence of the learning process can provide deeper insight into the level of understanding, reasoning processes, and ability to apply knowledge. It also makes learning visible and reduces reliance on surveillance approaches.

The learning process can be revealed through a variety of means including reflective journals, drafts or sketches, staged assessments, and iterative feedback cycles.

Benefits of process-based assessment include:

- Increased opportunities for peer learning, collaboration, and teacher-student interaction

- Development of students' awareness of their own strengths and weaknesses

- Enhanced student engagement and deep learning

- Development of critical thinking and problem-solving skills

- Reduced pressure on a final outcome

- Promotion of a culture of transparency and integrity

Process-focused assessment provides an alternative to surveillance-based integrity approaches. By making learning visible through drafts, checkpoints, oral interactions, and reflective commentary, educators create evidence of authentic engagement without depending on unreliable AI detection tools.