Assessment redesign in response to GenAI cannot be addressed solely at the level of individual modules. To ensure fairness and coherence, and to uphold academic standards, assessment strategy must be considered holistically at programme level and embedded within existing quality assurance processes.

1. Programme-Level Assessment Mapping

Programme teams should review assessment patterns across all stages to ensure a balanced and purposeful mix of assessment types. Such a mix should include:

- AI-restricted assessments (where independent knowledge, foundational skills, or professional competencies must be demonstrated without AI support)

- AI-integrated assessments (where responsible use of AI is aligned with learning outcomes and mirrors authentic professional practice)

- Increasing emphasis on process-based and staged assessments that make learning visible over time

This mapping helps avoid:

- Over-reliance on a single vulnerable format (e.g., multiple long essays)

- Inconsistent expectations around AI use between modules

- Unintended clustering of high-stakes, high-risk assessments

Programme-level planning ensures that students experience a coherent assessment journey, rather than a collection of disconnected module-level decisions.

2. Alignment with Learning Outcomes and Graduate Attributes

Assessment redesign must remain aligned with programme learning outcomes and graduate attributes, including emerging expectations around AI literacy.

Programme teams should consider:

- Where AI literacy is an explicit programme outcome

- Where AI literacy supports broader attributes such as critical thinking, ethical reasoning, and digital competence

- Where certain competencies (e.g., clinical judgment, foundational theory, professional standards) must be demonstrated without AI mediation

This ensures that decisions about AI use in assessment are pedagogically driven, rather than reactive or technology-led.

3. Consistency, Transparency, and Student Experience

From a quality perspective, students should encounter clear and consistent messaging about AI use across their programme.

This includes:

- Shared programme-level language for describing levels of permitted AI use

- Clear explanation of the rationale for AI-restricted vs AI-integrated tasks

- Opportunities for students to develop the skills needed to engage ethically and effectively with AI before being assessed on them

Inconsistent practices between modules can create confusion, inequity, and perceptions of unfairness. Programme-level coordination supports a transparent and supportive assessment culture.

4. Embedding Assessment Redesign into Quality Processes

Assessment redesign should be incorporated into existing quality assurance and enhancement structures, such as:

- Programme review and revalidation processes

- Annual monitoring and reporting

- External examiner discussions on assessment standards and authenticity

- Curriculum design and approval workflows

Rather than being treated as an emergency or temporary measure, AI-responsive assessment should be recognised as part of ongoing curriculum enhancement in a changing technological landscape.

5. Supporting Staff Through Structured Programme Approaches

Programme-level coordination also reduces the burden on individual lecturers by:

- Encouraging shared design approaches

- Facilitating cross-module collaboration

- Enabling collective decisions about where AI-restricted assessment is most appropriate

- Sharing effective practices in process-focused and authentic assessment

This creates a more sustainable model of change and helps ensure that assessment redesign enhances learning, rather than simply increasing staff workload.

Time Investment

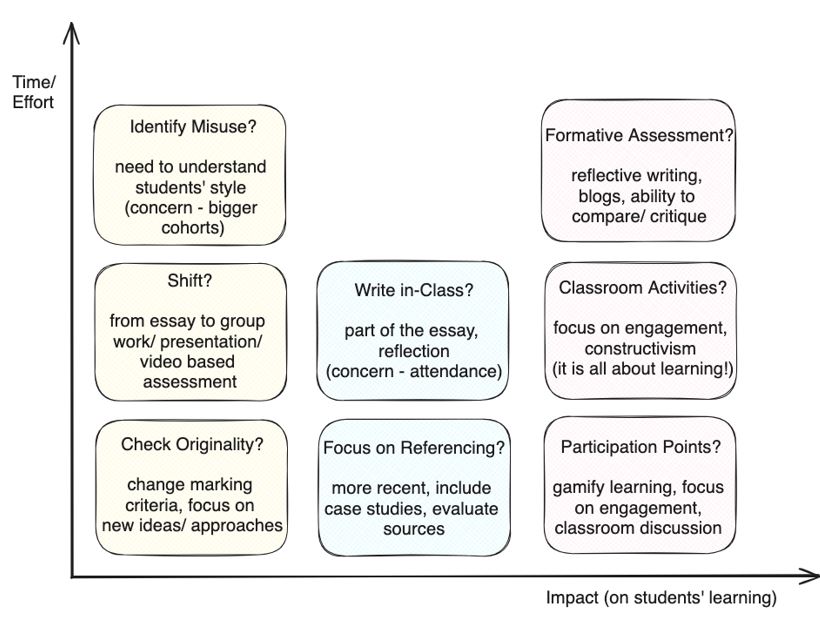

A valid consideration in assessment redesign is balancing the time and effort educators will need to invest in the process against its impact on the learning experience.

Virmani and Lau address this in a SEDA blog post (June 2024) and offer the following as a starting point for discussion:

- Horizontal axis (impact): measures the degree to which an assessment format influences positive changes in student learning outcomes.

- Vertical axis (time): indicates roughly how much time and effort educators would need to invest in modifying or redesigning a particular assessment format.

- Ideal zone: techniques that are high in impact yet require relatively little time to modify, balancing efficiency with effectiveness.

Exploring assessment approaches that deter cheating in the first place, rather than focusing on detection after the fact, can save time. However, there is no single 'perfect' solution, and the most effective approach will depend on your specific context, learning objectives, and available resources.

Institutional and Wider Supports

Sustainable assessment redesign in the age of GenAI cannot rely on individual educators working in isolation. Institutions have a responsibility to create the conditions that enable valid, fair, and future-facing assessment practices.

At institutional level, educators should be supported through:

- Structured professional development in AI literacy, assessment redesign, and ethical AI use

- Facilitated cross-disciplinary dialogue to share practice, align approaches, and reduce duplication of effort

- Development of unified institutional guidance on AI use in learning, teaching, and assessment

- Accessible repositories of assessment exemplars and resources to support redesign efforts

- Agile curriculum processes that allow timely updating of assessment strategies

Clear, practical AI policies and guidelines are essential, not only to define acceptable use but also to promote transparency, fairness, and consistency across programmes.

Beyond individual institutions, engagement with the national and international conversation around assessment and AI is equally important. Sectoral initiatives such as QQI's Rethinking Assessment, the academic integrity work of the National Academic Integrity Network (NAIN), and national coordination through the National Forum for the Enhancement of Teaching and Learning in Higher Education demonstrate a growing shared commitment to evolving assessment in responsible and evidence-informed ways.