Introduction

Since the launch of ChatGPT in November 2022 and the subsequent surge in Generative Artificial Intelligence (GenAI) technology, the education sector has been significantly affected, prompting efforts to develop policies, strategies, and guidelines that support staff and students in navigating the changing landscape. While these technologies offer great potential for enhancing learning experiences, they also pose significant challenges to academic integrity.

Assessment methods such as essays, unsupervised open-book or remote exams, and online quizzes are increasingly vulnerable, as students can use AI tools to produce content that appears original but is not their own work.

Although AI detection tools have emerged, their reliability remains limited, and they cannot serve as definitive evidence of academic misconduct. The focus therefore shifts from detection to prevention, with emphasis on authentic learning experiences. Higher education institutions urgently need to reconsider their assessment strategies to uphold academic standards and ensure that assessments accurately reflect students' knowledge and skills.

The Evolving Policy Context

Assessment redesign is not solely a pedagogical issue but also a matter of governance, quality, and ethical responsibility. National and international bodies now emphasise that assessment practices must remain robust as AI becomes increasingly accessible.

Key Developments

- National guidance encouraging AI literacy, transparency, and equitable access

- UNESCO's Recommendation on the Ethics of AI, which emphasises human oversight, inclusion, and accountability

- The evolving EU AI Act, which highlights education as a high-impact context requiring transparency and risk mitigation

These developments reinforce the central argument of this framework: assessment must be redesigned structurally rather than relying on detection.

Objectives

The goal of assessment redesign is to develop robust, fair, valid, and educationally meaningful approaches that ensure students can demonstrate genuine learning, understanding, and capability.

- Design assessment to make learning visible, rather than focusing solely on preventing misuse of AI tools

- Incorporate a diverse range of assessment types, balancing formative and summative approaches

- Place greater emphasis on process, reasoning, and development over time

- Recognise that redesigning assessment presents challenges, including time constraints, large class sizes, and evolving institutional expectations

- Accept that some assessments may appropriately integrate AI while others may require independent demonstration

- Keep the alignment of assessment with programme and module learning outcomes as the central guiding principle

Scope

This framework supports educators and programme teams in rethinking assessment design in the context of GenAI. It helps them to:

- Consider the purpose of each assessment and determine whether GenAI use supports or undermines the intended learning outcomes

- Identify assessment types that may be particularly vulnerable to inappropriate AI use and prioritise these for structural redesign

- Explore assessment approaches in collaboration with discipline-area colleagues

- Consider where and how GenAI can be meaningfully integrated into assessment

- Ensure coherence across modules and stages, contributing to a balanced programme-level assessment strategy

This framework does not prescribe a single solution, but offers principles and strategies that can be adapted to disciplinary contexts, institutional policies, and evolving technological and regulatory environments.

Reconsidering the Purpose of Assessment

In an educational landscape where AI is now deeply embedded, reconsidering the purpose of assessment is essential to fostering a more meaningful and authentic learning experience.

- Focus should shift towards assessing higher-order thinking skills, such as critical analysis, creativity, and problem-solving

- Assessments can better reflect real-world applications and prepare students for the modern workforce

- Students are evaluated not just on what they know, but on how they think and adapt

- The redefined purpose should aim to cultivate lifelong learners equipped with skills to navigate and innovate in an AI-driven world

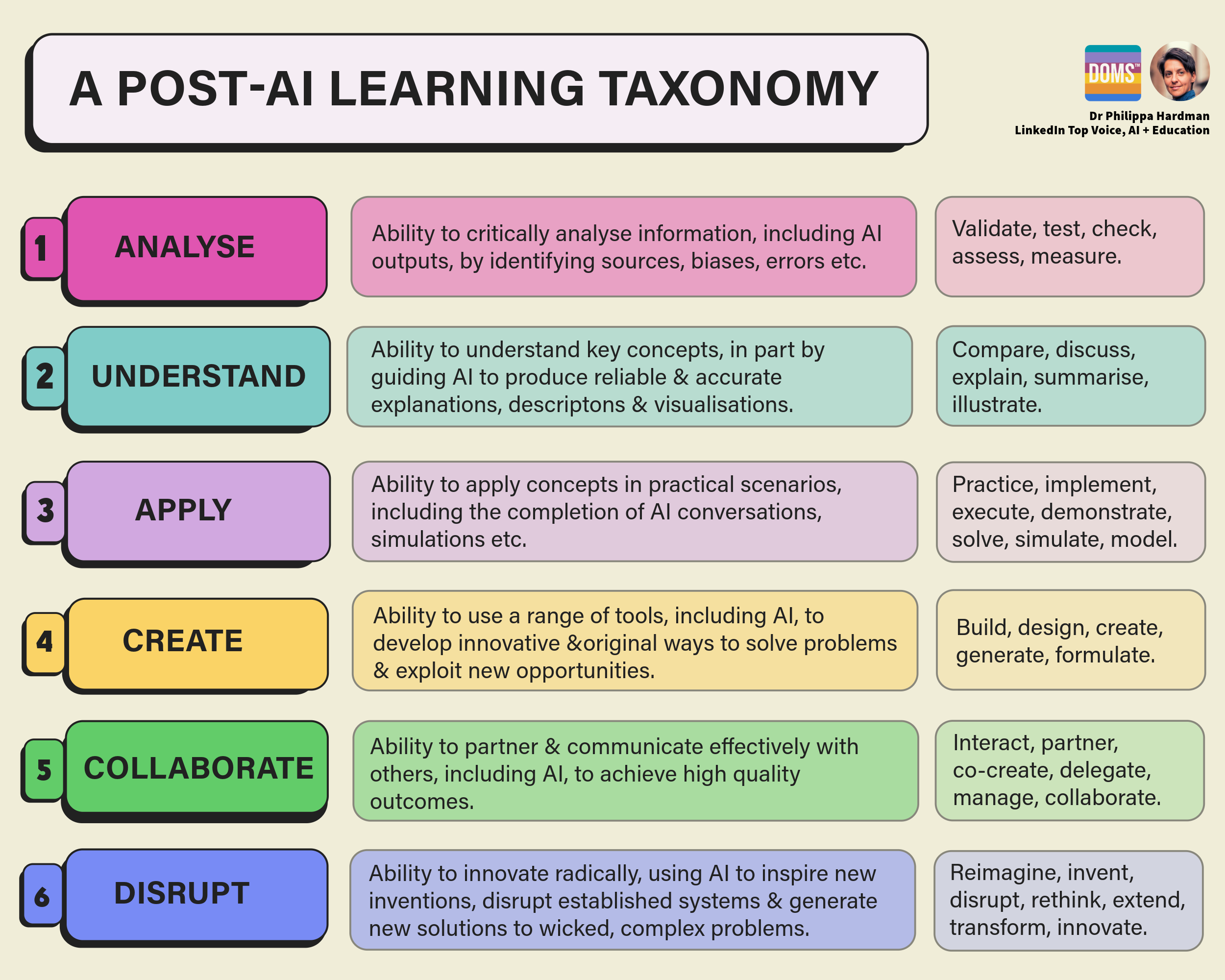

Philippa Hardman has suggested alternatives to Bloom's Taxonomy that focus on skills AI "enhances rather than replaces," encouraging educators to prioritise competencies where human judgement, creativity, and contextual reasoning remain essential.
